Insurance operations involve high-volume workflows and high-stakes decisions. Underwriters review long submissions, claims teams sort through documents and photos, and customer service handles the same questions all day. That’s where artificial intelligence in insurance is starting to make a real difference. AI can help teams triage faster, spot risks earlier, and keep customer support responsive. Generative AI in the insurance industry adds value to language-intensive tasks, such as summarizing claim files, extracting key details from policies, and drafting clear updates for policyholders.

In this article, we’ll walk through the most common AI use cases in insurance, including newer agentic approaches. We’ll also cover the benefits of AI in insurance, the risks, and the practical governance steps that help insurers use AI without losing control.

Evolution of AI in Insurance

Artificial intelligence refers to software that can learn from data and make decisions that usually require human judgment. In insurance, early AI was mostly rules and scoring models. It worked for basic automation, but it struggled when data was messy or situations were not “standard.”

Today, AI applications in the insurance industry look very different. Insurers use machine learning to spot patterns in messy, high-volume data. They use computer vision to review photos from claims. They use language models to pull key details from policies, medical records, and long claim files, then summarize what matters.

AI now supports underwriting, speeds up claims, flags suspicious activity, and helps customer teams respond faster. It can also support more tailored pricing and coverage by using signals from connected devices like telematics, wearables, and smart home sensors.

Generative and Agentic AI

Generative AI took insurance AI past simple scoring and “yes/no” predictions. It can work with language, which is why it’s useful for everyday tasks like summarizing a claim file, drafting a customer update, or pulling key facts from policies and medical records. It also saves time when the information you need is scattered across emails, PDFs, call notes, and different systems.

Agentic AI is starting to show up in insurance teams. Instead of producing a single answer, agentic systems can plan and execute a sequence of actions. For insurers, this can mean handling workflows such as intake, document checks, status updates, and routing, while still keeping humans in control for sensitive decisions. The core value is less about “automation for automation’s sake” and more about reducing handoffs and keeping processes consistent.

Adoption and Growth

AI in insurance is moving past pilot projects and into everyday work. Many carriers begin with practical use cases like document extraction, chat support, or claims triage because they are easier to roll out and manage. Once those early wins prove out, teams start connecting the tools into end-to-end workflows and strengthening their data foundation so the models stay consistent, reliable, and ready for audits.

This shift is happening for practical reasons. Customers expect faster service and clearer communication. Operations teams feel pressure to do more with less. Risk is also harder to manage as new data sources and new threats emerge. AI can help, but it only pays off when it is backed by strong data discipline, solid governance, and process changes that make sense in real workflows.

Key AI Applications in the Insurance Industry

AI shows up in insurance where teams deal with high volume, tight timelines, and complex decisions. Instead of replacing core roles, it often works as a “second set of eyes” that helps underwriters, claims adjusters, and customer teams move faster and stay consistent. Below are the main areas where AI typically brings the most value and what it actually does in day-to-day operations.

Underwriting and Risk Assessment

Underwriting involves evaluating risk and pricing policies. Traditional underwriting is labor‑intensive and can take days or weeks per customer. In lines such as auto and life insurance, generative AI models can parse large documents and produce “decision‑ready” risk summaries in minutes.

According to one report, generative AI can reduce average underwriting decision time from 3-5 days to 12.4 minutes for standard policies while maintaining a 99.3% accuracy rate. For complex policies, AI reduced processing times by 31% and improved risk‑assessment accuracy by 43%. Risk digitization platforms, such as Cytora and Google Cloud’s Vertex AI, automatically parse information from disparate sources, enabling underwriters to tailor policies to applicant needs while adhering to company protocols.

Claims Processing and Loss Control

AI significantly speeds up claims processing and reduces costs. McKinsey cites Aviva’s deployment of more than 80 AI models in its claims domain, which cut liability assessment time for complex motor claims by 23 days and improved routing accuracy by 30%. The transformation saved Aviva over £60 million (about $82 million) in 2024.

Beyond headline savings, AI enables claims teams to move faster on routine tasks, including intake, document handling, and routing. In loss control, it can prioritize inspections and flag early risk signals from photos, sensors, or adjuster notes before a small issue turns into a large loss.

Customer Communications and Personalization

Modern policyholders expect seamless digital interactions, and AI is helping insurers deliver them. Chatbots were initially used to answer basic questions, but improvements in AI now allow consumers to apply for policies, pay bills, and file claims via virtual assistants.

The Michigan Bar Journal notes that by 2022, more than 40 insurers had integrated chatbots into their daily operations. Across industries, many experts expect that AI multi‑agent systems will soon handle entire onboarding workflows, from intake to risk profiling and compliance checks.

AI for Fraud Detection in Insurance

Insurance fraud is a substantial challenge for insurers and honest customers. AI helps by spotting suspicious patterns that are easy to miss when claim volumes are high and information is spread across different systems. It can flag claims that look inconsistent, oddly repetitive, or unusually inflated, so investigators can focus on the cases that truly need a closer look.

But fraud models need careful handling. If they learn from biased or incomplete data, they can end up flagging the wrong people more often. That’s why insurers should establish clear guardrails: ethical rules, regular bias testing, and human review for cases where an AI signal could alter how a claim is processed.
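
As a rough illustration of flagging “unusually inflated” claims for human review, the sketch below scores each claim amount against its peer group using a modified z-score (median and median absolute deviation). This is one simple statistical technique, not a description of any insurer’s fraud model; the function name `flag_inflated_claims` and the 3.5 threshold are assumptions made for the example. The output is a list of cases for an investigator to look at, not a decision.

```python
from statistics import median


def flag_inflated_claims(amounts, threshold=3.5):
    """Return indices of claims whose amount is unusually high for the group.

    Uses the modified z-score: 0.6745 * (x - median) / MAD.
    The threshold of 3.5 is a common rule of thumb and is an assumption here.
    """
    med = median(amounts)
    mad = median([abs(a - med) for a in amounts])
    if mad == 0:
        # No spread in the peer group, so there is nothing to compare against.
        return []
    flagged = []
    for index, amount in enumerate(amounts):
        score = 0.6745 * (amount - med) / mad
        if score > threshold:  # only unusually HIGH amounts are of interest
            flagged.append(index)
    return flagged


# Hypothetical claim amounts for similar repairs; index 5 stands out.
similar_claims = [1200, 1350, 1100, 1280, 1420, 9800]
for_review = flag_inflated_claims(similar_claims)
```

A flag like this only narrows where investigators look. Whether the peer group itself is fair and representative is exactly the bias question raised above.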

AI in the Health Insurance Industry

In health insurance, AI enhances underwriting and claims while supporting preventive care. Wearable devices, smart home sensors, and telehealth platforms feed data into AI algorithms. These systems customize coverage options and automate the underwriting process, allowing flexible plans to fit individual needs. For example, sensors in insureds’ homes collect data to tailor policies and detect risks such as flooding before damage occurs. AI also helps spot fraudulent health claims and supports case managers in prioritizing high‑risk patients. As generative AI becomes more capable, it will allow insurers to explain complex medical policies in plain language and provide empathetic support to policyholders.

AI in Auto Insurance

Telematics and connected-car data have changed auto insurance. With a driver’s consent, insurers can use data from a vehicle or mobile app to understand driving habits, like braking, speed patterns, and mileage. AI helps make sense of those signals and build a clearer risk profile, which can support more personalized premiums. It can also help speed up claims. When a crash is detected, the same data can trigger an automated first notice of loss and start the claims process sooner.
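
To make the idea of a telematics-based risk profile more concrete, here is a minimal sketch that turns trip summaries into a 0-100 score from harsh braking, time spent speeding, and distance driven. The `TripSummary` fields, the weights, and the caps are all hypothetical; a real pricing model would be calibrated on loss data and reviewed for fairness.

```python
from dataclasses import dataclass


@dataclass
class TripSummary:
    """One consented trip, as it might arrive from a vehicle or mobile app."""
    distance_km: float
    harsh_brake_events: int
    seconds_speeding: float
    duration_seconds: float


def driving_risk_score(trips):
    """Return a 0-100 score (higher means riskier) from a list of trips.

    Weights and caps below are illustrative assumptions, not actuarial values.
    """
    total_km = sum(t.distance_km for t in trips)
    total_seconds = sum(t.duration_seconds for t in trips)
    if total_km <= 0 or total_seconds <= 0:
        raise ValueError("need at least one trip with distance and duration")

    # Normalize by mileage and driving time so heavy drivers are not penalized
    # simply for driving more.
    brakes_per_100km = 100 * sum(t.harsh_brake_events for t in trips) / total_km
    speeding_share = sum(t.seconds_speeding for t in trips) / total_seconds

    brake_component = min(brakes_per_100km / 10, 1.0)  # cap at 10 events/100 km
    speed_component = min(speeding_share / 0.2, 1.0)   # cap at 20% of drive time
    return round(100 * (0.5 * brake_component + 0.5 * speed_component), 1)


trips = [
    TripSummary(distance_km=50, harsh_brake_events=2,
                seconds_speeding=120, duration_seconds=3600),
    TripSummary(distance_km=50, harsh_brake_events=0,
                seconds_speeding=0, duration_seconds=3600),
]
score = driving_risk_score(trips)  # 14.2 with the weights above
```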

AI in Property and Casualty Insurance

In property insurance, AI often works best when it is paired with smart home or building systems. Sensors can spot early warning signs like a leak, smoke, or unusual temperature changes. AI helps turn those signals into a clear alert, so a small issue gets handled before it turns into a costly claim.

After an event, AI can also help teams move faster by sorting damage information, grouping similar cases, and supporting quicker estimates. For casualty lines, computer vision can review photos of things like vehicle damage or storm impacts and help create repair estimates that are faster and more consistent than a fully manual review.

Compliance and Governance Challenges

AI in insurance can enhance speed and accuracy, but it also raises genuine concerns about compliance, privacy, and ethics. In practice, insurers typically face three key pressures: regulators expect clear explanations, customers demand data protection, and internal teams require a reliable method for managing models once they’re in production.

Regulatory Compliance

AI tools often process large volumes of personal data. That puts extra pressure on privacy and security controls. Insurers also need to manage two common regulatory expectations:

- Fairness and non-discrimination. Models should not create biased outcomes for certain customer groups. Teams need bias testing, balanced training data, and clear escalation paths when the model behaves unusually.

- Transparency and explainability. Regulators may expect insurers to explain how an AI system reached a decision, especially in underwriting and claims. Clear explanations help build trust and reduce dispute risk.

Ethical Considerations

Ethical concerns usually show up before the rules are fully defined. That’s why insurers should be clear about who is accountable for AI-driven decisions and keep human oversight in place for high-impact calls, like coverage changes, claim denials, or fraud flags.

Governance and Continuous Monitoring

Insurers and intermediaries need a lifecycle approach that covers how models are built, tested, approved, deployed, and reviewed over time. Ongoing monitoring matters because data changes, customer behavior shifts, and models can drift if no one is watching.

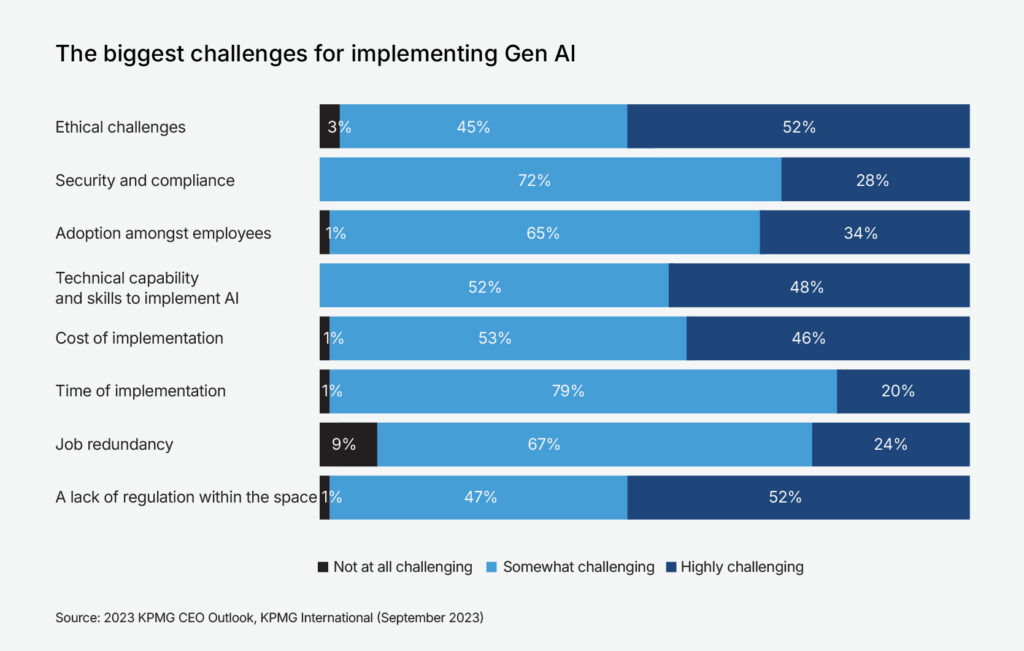

Public AI failures make some teams cautious about scaling without stronger guardrails. KPMG’s 2023 Insurance CEO Outlook points to ethics and regulatory uncertainty as key hurdles for adopting generative AI.

Best Practices for AI Compliance in Insurance

As insurers scale AI, “best practice” usually means two things: you can explain your decisions, and you can prove your controls work. These steps help you get there.

Data Governance

Data governance is where AI compliance usually starts. Here are a few tips insurers use to protect data, reduce risk, and keep decisions auditable:

- Define data ownership and access. Assign owners for key datasets (claims, underwriting, customer comms) and restrict access by role.

- Control quality at the source. If your inputs are inconsistent, your model outputs will be too. Build validation rules, standard definitions, and data lineage, so teams know what they’re using and where it came from.

- Privacy by design. Apply minimization (collect only what you need), retention limits, encryption at rest/in transit, and clear consent rules. For model training, consider anonymization/pseudonymization and keep sensitive fields out unless there’s a strong reason.

- Third-party data checks. If you buy data (telematics, credit, behavior signals), document what it is, how it’s sourced, and whether it creates unfair outcomes for certain groups.
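
The minimization and pseudonymization points above can be sketched in a few lines. The example below keeps only an allow-list of fields and replaces the customer identifier with a keyed hash (HMAC-SHA256 from Python’s standard library). The field names, `ALLOWED_FIELDS`, and `prepare_training_record` are hypothetical, and in practice the key would come from a secrets manager rather than source code.

```python
import hashlib
import hmac

# Hypothetical allow-list: only the fields the model actually needs.
ALLOWED_FIELDS = {"claim_id", "loss_type", "claim_amount", "policy_age_months"}


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash.

    The same input and key always give the same token, so records can still
    be joined, but the original value cannot be read back from the token.
    """
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def prepare_training_record(record: dict, secret_key: bytes) -> dict:
    """Drop fields outside the allow-list and pseudonymize the customer ID."""
    cleaned = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    cleaned["customer_ref"] = pseudonymize(record["customer_id"], secret_key)
    return cleaned


raw = {
    "customer_id": "C-1001",
    "name": "Jane Doe",           # dropped: not needed for training
    "claim_id": "CL-1",
    "loss_type": "water",
    "claim_amount": 1200,
    "policy_age_months": 18,
}
training_row = prepare_training_record(raw, secret_key=b"example-key-only")
```

Pseudonymized data is still personal data under many privacy regimes, because whoever holds the key can re-link it. Treat this as one control among several, not as anonymization.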

Transparency and Explainability

Transparency pays off when decisions get challenged. Here are a few steps that help insurers stay ready for audits and disputes:

- Pick the right level of explainability. Not every system needs deep model interpretability, but underwriting and claims decisions often require a clear rationale.

- Log the decision path. Store key inputs, model version, confidence score, and the top drivers behind an outcome. This is what supports audits and customer inquiries later.

- Use plain language outputs. Build “reason codes” or structured explanations that customer teams can share without guessing.

- Separate recommendations from decisions. When possible, let AI suggest options and keep the final decision rule-based or human-approved in sensitive workflows.
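
One way to picture “log the decision path” is a structured record written every time a model makes a recommendation. The sketch below is an assumption about what such a record could contain, based on the items listed above (key inputs, model version, confidence score, top drivers, reason codes); the class and field names are hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """Audit entry for one AI recommendation (illustrative fields only)."""
    decision_id: str
    model_version: str
    inputs: dict
    recommendation: str
    confidence: float
    top_drivers: list
    reason_codes: list
    # The AI output is stored as a recommendation; the final decision is a
    # separate field that a rule or a human fills in later.
    final_decision: str = "pending_human_review"
    logged_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record: DecisionRecord) -> str:
    """Serialize the record as one JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)


record = DecisionRecord(
    decision_id="D-1",
    model_version="claims-triage-1.4.2",
    inputs={"claim_amount": 1800, "loss_type": "water"},
    recommendation="fast_track",
    confidence=0.91,
    top_drivers=["low claim amount", "no prior claims"],
    reason_codes=["RC01", "RC07"],
)
log_line = to_log_line(record)
```

Keeping `recommendation` and `final_decision` as separate fields mirrors the last bullet above: the log shows what the model suggested and, separately, what was actually decided.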

Human Oversight

Here are a few practical ways to keep humans in the loop where AI decisions carry real risk:

- Human-in-the-loop for high-impact actions. Keep human review for claim denials, fraud escalations, cancellations, and any underwriting decision that materially affects price or coverage.

- Clear escalation rules. Define what triggers manual review (low confidence, unusual pattern, missing data, protected-class risk signals, customer complaint).

- Accountability by role. Name who can override AI outputs, who reviews exceptions, and who owns the outcome if something goes wrong.

- Training and playbooks. Customer service, adjusters, and underwriters should know how to interpret AI suggestions and when to challenge them.
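
Escalation rules are easier to audit when they are written down as explicit checks. The following sketch encodes the triggers listed above as a function that returns the reasons a case needs manual review. The field names, the 0.8 confidence floor, and the set of high-impact actions are assumptions for illustration.

```python
# Hypothetical set of actions that always require a human reviewer.
HIGH_IMPACT_ACTIONS = {"deny_claim", "escalate_fraud", "cancel_policy"}

REQUIRED_FIELDS = ("policy_id", "loss_date", "claim_amount")


def manual_review_triggers(case: dict, min_confidence: float = 0.8) -> list:
    """Return the list of reasons this case should go to a human.

    An empty list means no escalation rule fired.
    """
    triggers = []
    if case.get("confidence", 0.0) < min_confidence:
        triggers.append("low_confidence")
    if case.get("unusual_pattern", False):
        triggers.append("unusual_pattern")
    if any(case.get(name) in (None, "") for name in REQUIRED_FIELDS):
        triggers.append("missing_data")
    if case.get("protected_class_signal", False):
        triggers.append("protected_class_signal")
    if case.get("customer_complaint", False):
        triggers.append("customer_complaint")
    if case.get("proposed_action") in HIGH_IMPACT_ACTIONS:
        triggers.append("high_impact_action")
    return triggers


routine = {
    "policy_id": "P-1", "loss_date": "2024-05-01", "claim_amount": 900,
    "confidence": 0.95, "proposed_action": "approve",
}
denial = {
    "policy_id": "P-2", "loss_date": None, "claim_amount": 15000,
    "confidence": 0.6, "proposed_action": "deny_claim",
}
routine_reasons = manual_review_triggers(routine)  # no rule fires
denial_reasons = manual_review_triggers(denial)    # three rules fire
```

Returning the reasons, rather than a bare yes/no, gives the reviewer context and gives auditors a record of why a case was escalated.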

Governance and Continuous Monitoring

Governance is what keeps AI from turning into a hidden risk. Here are a few steps to manage models after launch:

- Model lifecycle controls. Document how models are built, tested, approved, deployed, and updated. Track versions and keep a change log.

- Bias and fairness testing. Test outcomes across relevant segments, monitor for unequal error rates, and re-train or adjust features when issues appear.

- Performance monitoring. Watch for accuracy drops, false positives in fraud detection, and adverse customer impacts (complaints, disputes, escalations).

- Regular audits. Schedule internal reviews and, when necessary, conduct independent audits, particularly for underwriting and claims automation.
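
“Unequal error rates” can be checked with a small amount of code once outcomes are known. The sketch below computes the false positive rate of a fraud flag per customer segment and the gap between the best and worst segment. It is a simplified illustration: the record layout and function names are hypothetical, and what counts as an acceptable gap is a policy decision this example does not make.

```python
from collections import defaultdict


def false_positive_rates(records):
    """Compute the false positive rate per segment.

    `records` is an iterable of (segment, flagged, actually_fraud) tuples.
    FPR = legitimate claims that were flagged / all legitimate claims.
    """
    false_positives = defaultdict(int)
    legitimate = defaultdict(int)
    for segment, flagged, actually_fraud in records:
        if not actually_fraud:
            legitimate[segment] += 1
            if flagged:
                false_positives[segment] += 1
    return {seg: false_positives[seg] / n for seg, n in legitimate.items() if n > 0}


def fpr_gap(rates: dict) -> float:
    """Difference between the highest and lowest segment FPR."""
    if not rates:
        return 0.0
    return max(rates.values()) - min(rates.values())


# Hypothetical reviewed outcomes for two segments.
outcomes = [
    ("A", True, False), ("A", False, False), ("A", False, False), ("A", False, False),
    ("B", True, False), ("B", True, False), ("B", False, False), ("B", False, False),
    ("B", True, True),  # true fraud: excluded from the FPR calculation
]
rates = false_positive_rates(outcomes)  # {"A": 0.25, "B": 0.5}
gap = fpr_gap(rates)                    # 0.25
```

Here, legitimate claims in segment B are flagged twice as often as in segment A, which is the kind of signal that should prompt a closer look at features and training data.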

Security and Vendor Management

Most AI risk enters through vendors and integrations. Here are a few checks that help keep data and workflows protected:

- Secure the full pipeline. Protect training data, model endpoints, and integrations the same way you protect core policy and claims systems.

- Vendor due diligence. For GenAI tools, clarify where data goes, whether it is used for provider training, how it’s stored, and how you can delete it.

- Prompt and output controls. Set guardrails to prevent leakage of personal data, internal pricing logic, or sensitive claim details.
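
As a small example of an output control, the sketch below redacts a few obvious identifiers from text before it is sent to, or returned from, a generative AI tool. The patterns are deliberately simple and the `POL-` policy number format is invented for the example; regular expressions alone will miss many forms of personal data, so this is a starting point rather than a complete safeguard.

```python
import re

# Illustrative patterns only. The policy number format is hypothetical.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "POLICY_NUMBER": re.compile(r"\bPOL-\d{6,}\b"),
}


def redact(text: str) -> str:
    """Replace each matched identifier with a labeled placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label} REDACTED]", text)
    return text


draft = "Contact jane.doe@example.com about POL-1234567, SSN 123-45-6789."
safe_draft = redact(draft)
# "Contact [EMAIL REDACTED] about [POLICY_NUMBER REDACTED], SSN [SSN REDACTED]."
```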

Stakeholder Engagement

Stakeholder alignment is part of compliance. Here are a few ways to keep expectations clear and avoid surprises later:

- Engage regulators and industry bodies. If you’re introducing automated decisions in underwriting or claims, involve compliance early and keep documentation ready.

- Prepare customer-facing messaging. Customers want to know what AI is doing and how to challenge a decision.

- Align internally. Legal, compliance, underwriting, claims, and IT should share the same definitions of “acceptable risk” and “explainable decision.”

Conclusion

The insurance industry is on the cusp of an AI-driven transformation. Artificial intelligence in insurance can enhance underwriting, accelerate claims processing, help reduce fraud, and create personalized customer experiences. Adoption is accelerating, and market projections suggest AI will become essential to competitive insurance operations.

Still, real results depend on more than the model. Insurers need strong data quality, modern infrastructure, ethical governance, and skilled teams. The safest path is to start with high-impact use cases that deliver quick wins, then scale using reusable components and clear controls.

If you want a practical roadmap for AI in insurance (use cases, architecture, governance, and rollout), contact us. Svitla Systems supports insurers from discovery and prototyping to production deployment and ongoing optimization.