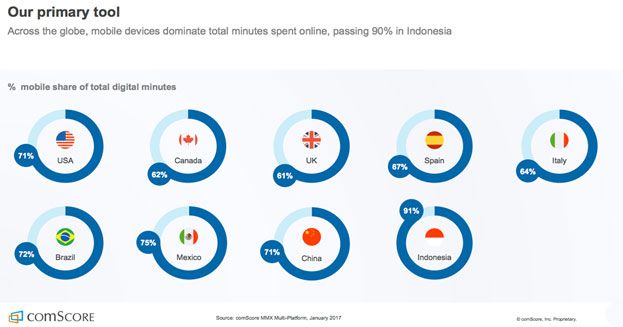

Marketing experts continue to focus their attention on the latest mind-boggling predictions for the mobile application market. For instance, the comScore 2017 report shows that the time our society spends online via mobile devices continues to climb, with countries like Italy and Indonesia consuming 61% and 91% of digital minutes via a mobile device, respectively. The following graphic illustrates how these statistics vary across the globe.

Retrieved from this source

These sky-high figures along with soaring mobile revenues, which are expected to reach 188.9 billion U.S. dollars by 2020, motivate many businesses to “go mobile.” However, these decision-makers must account for the challenges they may face in doing so, many of which are caused by insufficient testing.

Given how demanding the mobile market has become and how quickly customers expect their needs to be met, the days of low-quality applications earning millions are over.

However, despite these factors, companies still often push for rapid mobile application delivery, perhaps underestimating the importance of mobile quality assurance. The value added through manual and automated testing includes consistency, good usability, and performance of the application, which all play a pivotal role in ensuring its success.

ABCs of mobile QA automation

Mobile QA automation is multi-purpose, as it is a good fit for both a ready-to-use product and one still in development. It speeds up testing across a myriad of mobile devices and platforms, increases test coverage, handles rote tasks, and increases the probability of catching bugs while coding.

Even though writing test scripts takes time, the effort pays off: one hour spent on test creation may save up to 7-8 hours of development. Code errors are inevitable when writing a program, which is why tech experts at Svitla recommend covering code with unit tests, even in the early stages of development. This allows software teams to reduce bugs from build to build and cut down on regression tests.
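As a minimal sketch of what early unit-test coverage can look like, the example below tests a small piece of application logic with Python's standard `unittest` module. The `cart_total` function and its discount rule are hypothetical and stand in for any business logic that already exists early in development.

```python
import unittest


def cart_total(prices_in_cents, discount_percent=0):
    """Hypothetical app logic: sum item prices and apply a percentage discount."""
    if not 0 <= discount_percent <= 100:
        raise ValueError("discount_percent must be between 0 and 100")
    subtotal = sum(prices_in_cents)
    # Integer arithmetic keeps cent values exact
    return subtotal - (subtotal * discount_percent) // 100


class CartTotalTest(unittest.TestCase):
    def test_sums_prices_without_discount(self):
        self.assertEqual(cart_total([199, 301]), 500)

    def test_applies_discount(self):
        self.assertEqual(cart_total([1000], discount_percent=25), 750)

    def test_rejects_invalid_discount(self):
        with self.assertRaises(ValueError):
            cart_total([1000], discount_percent=150)
```

Saved to a file, the tests can be run on every build with `python -m unittest`, so a regression in the discount logic would surface immediately rather than during a later manual pass.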

That said, QA engineers should resist the temptation to automate nearly everything. If some functionality changes frequently, manual QA testing is usually the better strategy, because automated scripts for it would need constant rewriting.

Prior to testing, researching the user personas as well as the intended uses of the application will be vital to its success. After this step, QA teams may then spend time reviewing the QA automation strategy as a whole and selecting the most laborious tasks to be automated. By doing so, team leads can more accurately estimate the time needed for the creation of the test infrastructure, allowing them to use their labor resources more effectively and efficiently.

The road to following the best QA practices may not always be smooth. Scroll down to explore what challenges may arise along the way and how best to respond to them.

QA best practices & challenges

Surprisingly, bottlenecks in test automation are often caused by poor objective setting. Before creating another test set, one should first define the goals and possible outcomes of the test. Skipping this step can lead to inaccurate estimates of the cost and time that automated testing will require. With careful planning in place, however, the QA team is more likely to avoid writing scripts for functionality that may soon be out-of-date.

Ensuring that the application is usable on different types of popular mobile devices is another important strategy to consider. Because manufacturers introduce new configurations frequently and device fragmentation is rampant, Svitla’s QA automation engineers suggest deploying device-specific policies. These make sure the application works well on the reference devices first so that engineers can then proceed with testing on other devices popular among end-users.
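One way to express such a device-specific policy is as a simple ordering rule: reference devices first, then everything else by popularity. The sketch below assumes a hypothetical `Device` record and illustrative user-share numbers; neither comes from a real device catalog.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    name: str
    os_version: str
    is_reference: bool  # part of the team's baseline device set
    user_share: float   # fraction of end-users on this device (illustrative)


def plan_test_order(devices):
    """Return devices with reference devices first, then by descending user share."""
    # False sorts before True, so "not is_reference" puts reference devices first
    return sorted(devices, key=lambda d: (not d.is_reference, -d.user_share))


# Illustrative data only
fleet = [
    Device("Device C", "12", False, 0.30),
    Device("Device A", "13", True, 0.10),
    Device("Device B", "11", False, 0.05),
]

for device in plan_test_order(fleet):
    print(device.name, device.os_version)
```

Keeping the policy in one function makes it easy to adjust, for example by dropping devices below a minimum user share when the test budget is tight.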

Another obstacle that undermines the application’s dependability is access to input, output, and storage devices (such as the digital camera, microphone, fingerprint scanner, and various sensors that detect the device shaking, tilting, etc.). When mobile phone emulators cannot reproduce this hardware, the most logical solution is to use real devices.

In QA automated testing, teams need to consider which UI elements will change continuously in order to avoid rewriting tests and rescheduling releases. In addition, automated QA testing becomes far more complicated when the application includes custom UI elements that can be tested only via custom libraries, which is quite labor-intensive. Therefore, QA automation engineers at Svitla advise developing tests only for common usability flows to avoid constant test updates and reviews.

Another challenge team leads face is running automated tests quickly and simultaneously. Cloud-based services like Amazon Web Services and Microsoft Azure, or tools like Jenkins and Docker, which allow one to build an automated deployment pipeline remotely, are critical to ensuring the scalability of the test environment. Here, QA team leads should carefully manage test infrastructure assets to avoid running over budget, and select QA automation tools that require minimal setup.
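To illustrate the idea of running suites simultaneously while keeping infrastructure use bounded, here is a minimal sketch based on Python's standard `concurrent.futures` module. The `run_suite` function is a hypothetical placeholder: in a real pipeline it would dispatch the suite to a cloud device or container, whereas here it only simulates a short run.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_suite(device_name):
    """Placeholder for a real test run on one device (hypothetical)."""
    time.sleep(0.1)  # simulate the time a suite takes
    return device_name, "passed"


def run_in_parallel(device_names, max_workers=4):
    """Run the suite on several devices at once, capped at max_workers."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves input order and yields (device, status) pairs
        return dict(pool.map(run_suite, device_names))


results = run_in_parallel(["Device A", "Device B", "Device C"])
print(results)
```

The `max_workers` cap is the knob that ties back to budget: it limits how many devices or containers are in use at any one time.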

Automation frameworks for Android and iOS

QA automation frameworks do not evolve as rapidly as the mobile application market. In fact, many of the existing tools that claim to be the best in QA automation and carry a higher price tag actually have an architecture and building blocks similar to those of their lower-priced counterparts. Therefore, when selecting QA automation tools, testers usually focus on topics like customization, setup requirements, technologies used, operating systems (OSs) supported, script modification feature availability, and community support.

Among the most popular automated test frameworks, there are Appium (Android and iOS), Robotium (Android), and Calabash (Android and iOS).

Appium

Appium is an open-source test automation framework actively supported by the testing community. It supports native and hybrid applications on both Android and iOS and has extensive and useful technical documentation. One of the framework’s biggest advantages is a wide-ranging choice of test programming languages (Python, C#, Ruby, Java, and more). In addition, Appium does not require changes to the application’s code, saving precious time during testing.

Pros:

- Runs cross-platform tests

- Supports continuous integration

- Syncs with other frameworks

- Does not need to access source code

- Quickly adopts changes

Cons:

- Initial setup is quite laborious

- iOS tests can be run only from Mac machines or servers
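As a rough sketch of the cross-platform point, the helper below builds the session capabilities Appium expects for each platform from one shared function. The device names and app paths are placeholders, and the exact set of capabilities a project needs depends on its Appium version and installed drivers, so treat this as an assumption-laden starting point rather than a complete configuration. The resulting dictionary would be handed to an Appium client when a session is opened; that step is omitted here.

```python
def build_capabilities(platform, device_name, app_path):
    """Build a minimal Appium capabilities dict for Android or iOS."""
    # Automation backends Appium uses for each platform
    automation_by_platform = {"Android": "UiAutomator2", "iOS": "XCUITest"}
    if platform not in automation_by_platform:
        raise ValueError(f"Unsupported platform: {platform}")
    return {
        "platformName": platform,
        "appium:automationName": automation_by_platform[platform],
        "appium:deviceName": device_name,  # placeholder device name
        "appium:app": app_path,            # placeholder path to the build
    }


android_caps = build_capabilities("Android", "Android Emulator", "/path/to/app.apk")
ios_caps = build_capabilities("iOS", "iPhone Simulator", "/path/to/app.ipa")
print(android_caps)
print(ios_caps)
```

Because only the capabilities differ, the same test logic can in principle be pointed at either platform by swapping the dictionary.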

Robotium

Robotium is another open-source test automation framework. It is well suited to black-box UI testing of both native and hybrid Android applications. Similar to Appium, this framework supports all API levels, and it has an active community of contributors.

Pros:

- Allows writing test cases even without knowing the application well

- Captures screenshots at any stage of testing

- Manages a few testing activities simultaneously

- Runs tests very fast

Cons:

- Can handle tests for only one application at a time

- May fail to test applications that require the camera or other input/output devices

Calabash

Maintained by Xamarin, this mobile automation framework is also free and open source and is said to quickly absorb technological innovations. Calabash is equally good for Android and iOS native applications as it has separate libraries for each.

Pros:

- Allows running tests on real devices

- Favors Behavior Driven Development (BDD)

- Gets instantly updated, thanks to its contributors’ support

- Integrates with Cucumber, and therefore, uses simple English in test statements

Cons:

- May skip some essential test steps after an earlier step fails

- Supports only Ruby

- Requires a lot of time to complete tests

Types of mobile testing: manual vs automated

In addition to a careful QA automation tool selection, it is critical to define what is subject to automation and manual testing in QA. The right choice may not always be obvious due to application complexity and technology restrictions. To help clarify, we have collected a few suggestions from Svitla’s team of experts on the most commonly performed test types.

Memory leakage testing. If applications experience difficulty managing memory, the overall performance decreases. In this case, both manual and automated QA tests should be performed to obtain accurate results.

Functional testing. As a rule, user interface and app features undergo dynamic changes, which means that functional testing proves to be more effective when run manually. However, all repeated user stories should be covered by automated tests.

Performance testing. Load, volume, recovery or stress test cases require a large data set and preparation. Hence, performance testing should be automated.

Usability testing. Testing application usability should only be run manually to acquire feedback from real users and to understand whether or not the application can meet the expectations of its potential users.
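The suggestions above can be condensed into a small lookup that a team might keep alongside its test plan. This is only a sketch: the `recommend` function and its `is_repeated` flag are hypothetical names introduced here to encode the four recommendations.

```python
from enum import Enum


class Approach(Enum):
    MANUAL = "manual"
    AUTOMATED = "automated"
    BOTH = "manual and automated"


def recommend(test_type, is_repeated=False):
    """Map a test type to the approach suggested in the text above."""
    if test_type == "functional":
        # Functional tests are manual by default; repeated user stories are automated
        return Approach.AUTOMATED if is_repeated else Approach.MANUAL
    defaults = {
        "memory leakage": Approach.BOTH,
        "performance": Approach.AUTOMATED,
        "usability": Approach.MANUAL,
    }
    if test_type not in defaults:
        raise ValueError(f"No recommendation for test type: {test_type}")
    return defaults[test_type]


for kind in ("memory leakage", "functional", "performance", "usability"):
    print(kind, "->", recommend(kind).value)
```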

Bottom line

Automated QA testing is absolutely necessary when performing tests repeatedly, collecting multiple data sets, checking functionality performance, or trying to reduce human error. The success of a test’s execution largely depends on how well the test syncs with the development process itself. For this reason, we can expect that mobile QA will continue to integrate with development, and rather than teams using code-intrusive tools, they will instead deploy QA testing best practices that will work well in Agile-driven environments.