Article summary: Global AI spending is forecast to reach $2.52 trillion in 2026, yet a PwC survey of CEOs found that 56% have seen neither increased revenue nor decreased costs from their AI investments. AI cost optimization has become the defining challenge for organizations that have spent the past two years experimenting and refining their approaches. This article explores where AI budgets are allocated, why cloud and infrastructure costs escalate under AI workloads, what sets the small group of companies achieving real returns apart, and provides practical strategies for AI ROI and AI infrastructure cost optimization.

AI cost optimization was not a priority when organizations rushed to adopt generative AI in 2023 and 2024. The priorities were getting in, hiring ML engineers, setting up pilots, and building proof-of-concept demos that impressed the board. Cost control could wait.

Well, it can't wait anymore.

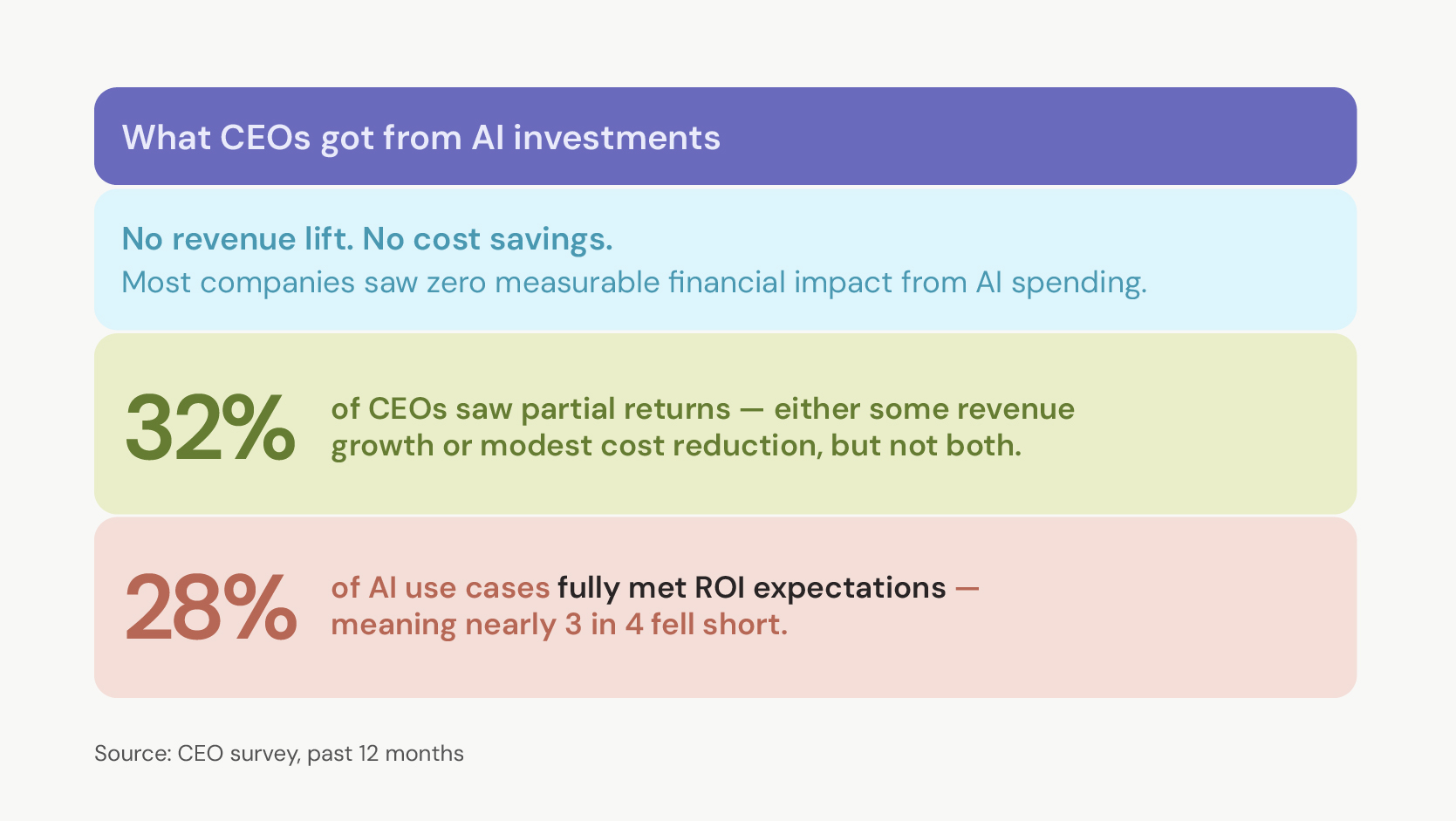

Global AI spending will reach $2.52 trillion by the end of 2026, according to Gartner. Cloud spending crossed $1 trillion for the first time in 2026, with AI workloads driving much of the growth. And the returns? PwC's 2026 Global CEO Survey found that 56% of CEOs saw no increase in revenue or decrease in costs from AI over the past twelve months. Only 12% achieved both.

Organizations that treated AI as a race to adopt are now paying the price. Without cost governance, AI ROI measurement, and infrastructure planning, budgets grow faster than the value they produce. A 2026 Gartner survey found that only 28% of AI use cases meet ROI expectations, 20% fail outright, and the remaining 52% fall short of delivering what was promised.

A small minority of organizations, between 5 and 12%, are identified by McKinsey and BCG as "AI high performers," and they share a similar pattern. They paired their AI investments with the governance, infrastructure planning, and ROI accountability needed to make those investments sustainable. This article covers those strategies, starting with where the money is going and why it's not coming back for most organizations.


Where is the money going?

The five largest hyperscalers (Amazon, Alphabet, Microsoft, Meta, and Oracle) are collectively forecasted to exceed $600 billion in capital expenditure by the end of 2026, with roughly $450 billion allocated directly to AI infrastructure (servers, GPUs, data centers, and cooling systems).

In 2023 and 2024, the AI cost story centered on training, building larger models, and purchasing more GPUs.

Today, inference (running trained models to generate real-time outputs) accounts for 55% or more of AI infrastructure spending, up from 33% in 2023. Deloitte's analysis puts inference at 66% of all AI compute load. The number keeps climbing because inference, unlike training, never stops. A model gets trained once, but it is queried thousands or millions of times a day, every day, for as long as it remains in production.

An agent in production doesn't make one inference call and stop. It reasons, plans, calls tools, evaluates results, and iterates, sometimes dozens of times per task. Each loop is another inference call.

Some enterprises already spend tens of millions of dollars a month on AI, largely due to continuous inference. API-based LLM tools that work for proof of concept become financially unsustainable when deployed across real business operations.

All of this creates a cost pattern that surprises finance teams accustomed to traditional software budgets. Training is a fixed, one-time expense, while inference is an ongoing, variable cost that scales with usage.

GPT-4's training reportedly cost around $150 million, but its inference costs reached an estimated $2.3 billion within two years, roughly 15x the training investment. Per-token costs have dropped 280-fold over the past two years, but usage has grown even faster. Organizations are paying less per query and spending more in total.
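The arithmetic behind these two claims is simple enough to sketch. In this minimal illustration the training and inference figures come from the estimates above, while the 500x usage growth is a hypothetical number chosen only to show how total spend can rise even as per-token prices fall.

```python
# Figures from the text: reported GPT-4 training cost and estimated two-year inference cost.
TRAINING_COST_USD = 150e6
INFERENCE_COST_USD = 2.3e9


def inference_to_training_ratio(training_usd: float, inference_usd: float) -> float:
    """How many times the one-time training spend the ongoing inference spend has reached."""
    return inference_usd / training_usd


def total_spend_multiplier(price_drop_factor: float, usage_growth_factor: float) -> float:
    """Change in total spend when spend = price x volume, the per-token price falls
    by price_drop_factor, and token volume grows by usage_growth_factor."""
    return usage_growth_factor / price_drop_factor


print(f"Inference vs. training: {inference_to_training_ratio(TRAINING_COST_USD, INFERENCE_COST_USD):.1f}x")
# Hypothetical: a 280x per-token price drop combined with an assumed 500x growth in token volume.
print(f"Total spend multiplier: {total_spend_multiplier(280, 500):.2f}x")
```

Any usage growth factor above 280 produces a multiplier above 1, which is the pattern described above: cheaper queries, larger bills.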

The breakdown of enterprise AI infrastructure cost challenges falls into a few categories.

- Compute is the largest, particularly in terms of GPU costs. For example, an NVIDIA H100 on AWS runs roughly $3.90 per GPU per hour on demand, a rate that reflects the 44% price cut AWS made in mid-2025 as supply caught up with demand. An 8-GPU cluster on AWS or Azure runs $31 to $33 per hour at on-demand rates, dropping below $16 per hour with a one-year Savings Plan.

- Then comes data storage and movement. AI systems require massive datasets, and transferring data between regions, services, or clouds generates egress fees that can add 20-40% to monthly AI infrastructure costs.

- Platform licensing, managed services, and monitoring tools add another layer of cost.

- Hidden costs also compound: failed experiments that restart without checkpointing, idle GPU instances left running between jobs, and teams provisioning the largest available instance because nobody set guidelines about right-sizing.
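To see how these compute rates translate into a monthly bill, here is a rough sketch. It assumes 730 billable hours per month (8,760 hours divided by 12, a common cloud billing convention), treats $16 per hour as the Savings Plan rate even though the text says "below $16," and uses a hypothetical 40% utilization to show what idle time does to the effective price of useful GPU hours.

```python
# Assumption: 730 billable hours per month (8,760 hours / 12), a common cloud billing convention.
HOURS_PER_MONTH = 730


def monthly_cost(hourly_rate: float, hours: float = HOURS_PER_MONTH) -> float:
    """Cost of keeping an instance running for the given number of hours."""
    return hourly_rate * hours


def cost_per_useful_hour(hourly_rate: float, utilization: float) -> float:
    """Effective price of one hour of real work when the instance is idle part of the time."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_rate / utilization


per_gpu_on_demand = 3.90                # H100 on AWS, on-demand, per GPU-hour (from the text)
node_on_demand = 8 * per_gpu_on_demand  # 8-GPU node: $31.20/hr, inside the $31-33 range
node_savings_plan = 16.00               # assumption: upper bound of the one-year Savings Plan rate

print(f"On-demand, always on:    ${monthly_cost(node_on_demand):,.0f}/month")
print(f"Savings Plan, always on: ${monthly_cost(node_savings_plan):,.0f}/month")
# Hypothetical: the node does useful work in only 40% of the hours it is billed.
print(f"Effective on-demand rate at 40% utilization: "
      f"${cost_per_useful_hour(node_on_demand, 0.4):.2f} per useful hour")
```

At these assumed rates, an always-on node costs about $22,776 a month on demand versus about $11,680 under the Savings Plan, and idle time raises the effective price of each useful hour whichever rate applies.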

Why most organizations don't see AI returns

Most organizations invested in AI before they even built ways to measure whether AI was working. Deloitte's survey found that only 6% of organizations saw AI ROI payback in under a year. The typical payback period is two to four years. For context, the normal expected payback for technology investments is seven to twelve months.
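Payback period itself is a simple calculation, which makes it a useful sanity check before a project is approved. The sketch below uses the undiscounted payback formula (upfront cost divided by monthly net benefit). The project cost, savings, and running cost are hypothetical; the running-cost term is there to show how ongoing inference and hosting spend eats into the benefit.

```python
import math


def net_monthly_benefit(cost_savings: float, added_revenue: float, run_cost: float) -> float:
    """Monthly benefit after subtracting the ongoing cost of running the AI system."""
    return cost_savings + added_revenue - run_cost


def payback_months(upfront_cost: float, monthly_net_benefit: float) -> float:
    """Simple (undiscounted) payback: months until cumulative net benefit covers the upfront cost."""
    if monthly_net_benefit <= 0:
        return math.inf  # the project never pays back at this run rate
    return upfront_cost / monthly_net_benefit


# Hypothetical project: $1.2M to build and deploy, $55k/month in savings,
# no new revenue, and $15k/month in inference and hosting costs.
benefit = net_monthly_benefit(cost_savings=55_000, added_revenue=0, run_cost=15_000)
months = payback_months(upfront_cost=1_200_000, monthly_net_benefit=benefit)
print(f"Payback: {months:.0f} months")                # Payback: 30 months
print("Meets a 12-month expectation:", months <= 12)  # Meets a 12-month expectation: False
```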

AI takes two to four times longer to show returns than most other technology bets. When one executive in the Deloitte study was asked about the disconnect between investment and outcomes, the answer was candid:

"Everyone is asking their organization to adopt AI, even if they don't know what the output is."

The same three problems keep popping up.

- The pilot-to-production gap. Most AI projects start as proof-of-concept. They work in a controlled environment with clean data and a limited scope. Moving from pilot to production requires integration with existing systems, data pipelines, security and compliance reviews, monitoring infrastructure, and organizational change management. Many organizations fund the pilot but underestimate the cost of everything that follows. Forrester warns that organizations that failed to show ROI in the first half of 2026 are pushing up to 25% of their planned AI spend into 2027, a clear sign that the pipeline from experiment to production remains badly clogged.

- Measuring the wrong things. Organizations that track AI success by adoption metrics (how many employees use the tool, how many queries the model handles, how fast the pilot was built) often can't connect those numbers to business outcomes. Only 33% of AI initiatives meet ROI targets, according to Salesforce research. Part of the problem is that the metrics most teams use don't map to the ones the CFO cares about: revenue impact, cost reduction, margin improvement, and time to market. McKinsey found that over 80% of organizations report no meaningful impact on enterprise-wide earnings before interest and taxes (EBIT) from AI, even when individual projects show productivity gains.

- No one owns the outcome. AI initiatives often sit in a governance vacuum. According to Deloitte's data, only 10% of organizations have CEO-led AI agendas, yet those that do are twice as likely to see meaningful EBIT improvement.

Johann Beukes, Chief AI Officer at Svitla Systems, has seen this pattern firsthand: "The assumption is that once it works in a demo, it's ready. In reality, that's when the work begins."

What follows the demo is rarely a smooth path to production. It's a collision with the things nobody budgeted for: data pipelines, security reviews, monitoring infrastructure, and a change management process. The organizations stuck in "pilot purgatory" aren't there because the technology failed. They're there because they built the model without building the measurement, governance, and infrastructure around it.

How AI workloads make cloud costs unpredictable

Cloud cost models that worked for traditional software break under AI workloads. Traditional cloud costs are relatively predictable, while AI workloads are erratic.

As covered earlier, inference now dominates AI compute spending. But the way inference costs accumulate is what makes them dangerous to underestimate. A proof-of-concept chatbot serving 100 internal users costs very little. The same model deployed to 50,000 customers generates a bill that grows linearly with every conversation. Agentic workflows multiply the problem because each agent task triggers multiple inference calls as the system reasons, evaluates, and iterates. Because costs ramp gradually alongside usage, they often go unnoticed until they become a serious problem.
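The linear growth described above, and the extra multiplier from agentic loops, can be made concrete with a small cost model. Every number in it (users, conversations per user, calls per conversation, tokens per call, and the price per million tokens) is a hypothetical placeholder, not a quote from any provider.

```python
from dataclasses import dataclass


@dataclass
class InferenceWorkload:
    users: int
    conversations_per_user_per_month: float
    calls_per_conversation: float  # 1 for a plain chatbot; higher when an agent reasons and iterates
    tokens_per_call: int
    usd_per_million_tokens: float

    def monthly_cost(self) -> float:
        """Total monthly inference cost: calls x tokens per call x price per token."""
        calls = self.users * self.conversations_per_user_per_month * self.calls_per_conversation
        return calls * self.tokens_per_call * self.usd_per_million_tokens / 1_000_000


# Hypothetical values chosen only to show scaling; substitute real usage and pricing.
pilot = InferenceWorkload(100, 20, 1, 2_000, 5.0)
production = InferenceWorkload(50_000, 20, 1, 2_000, 5.0)
agentic = InferenceWorkload(50_000, 20, 12, 2_000, 5.0)

for name, workload in [("pilot", pilot), ("production", production), ("agentic", agentic)]:
    print(f"{name:>10}: ${workload.monthly_cost():,.2f}/month")
```

With these placeholder values the pilot costs $20 a month, the same chatbot at 50,000 users costs $10,000, and an agent making 12 calls per conversation costs $120,000. Nothing about the model changed; only usage and the number of calls per task did.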

Data movement costs are the hidden surcharge. GPU compute gets most of the attention, but data transfer fees quietly add 20 to 40% to monthly AI infrastructure costs. AI systems move large volumes of data between storage, compute instances, regions, and services.

On AWS, a team transferring 10TB per month in API response data pays roughly $900 per month in egress fees alone, before a single compute cost is counted. Cross-region replication for disaster recovery, data syncing between training and inference environments, and model storage all generate transfer costs that rarely appear in budgets. Most organizations don't discover the scale of data movement costs until they're already embedded in the billing pattern.
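The $900 figure is consistent with a flat rate of about $0.09 per GB. The sketch below assumes that rate (roughly AWS's first-tier price for data transferred out to the internet in many regions; actual pricing varies by region, volume tier, and destination, and the free allowance is ignored) and 1,000 GB per TB. It also applies the 20 to 40% data-movement overhead to a hypothetical $50,000 monthly compute bill.

```python
def egress_cost(tb_per_month: float, usd_per_gb: float = 0.09, gb_per_tb: int = 1_000) -> float:
    """Monthly egress fee at an assumed flat per-GB rate (real pricing is tiered)."""
    return tb_per_month * gb_per_tb * usd_per_gb


def with_data_movement_overhead(compute_usd: float, overhead: float) -> float:
    """Total monthly bill when data movement adds `overhead` (e.g. 0.2 for 20%) on top of compute."""
    return compute_usd * (1 + overhead)


print(f"10 TB egress: ${egress_cost(10):,.0f}/month")
print(f"10 TB egress (1,024 GB per TB): ${egress_cost(10, gb_per_tb=1_024):,.2f}/month")

compute_bill = 50_000  # hypothetical monthly compute spend
low = with_data_movement_overhead(compute_bill, 0.20)
high = with_data_movement_overhead(compute_bill, 0.40)
print(f"With 20-40% data movement: ${low:,.0f} to ${high:,.0f}/month")
```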

AI infrastructure costs are more exposed to hardware market shifts than traditional cloud costs. Since late 2025, DDR5 memory costs have increased by 307% due to supply chain constraints and AI-driven demand. NAND flash prices rose 33 to 38%. Cloud providers have started passing these costs through: general workload price increases are expected by mid-2026, with steeper hikes for memory-heavy services.

One financial services firm saw its ElastiCache costs rise 22% in a single quarter due to memory pricing adjustments alone. Static annual budgets, the kind that work for traditional infrastructure planning, can't absorb these shifts without constant recalibration.
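One lightweight way to do that recalibration is to compare each quarter's actual spend against the static budget and reforecast when the drift exceeds a tolerance. The sketch below is hypothetical throughout: the $30,000 quarterly budget, the 10% tolerance, and the run-rate reforecast are illustrative choices, with the 22% jump mirroring the ElastiCache example above.

```python
def drift_pct(budgeted: float, actual: float) -> float:
    """Percentage by which actual spend differs from the budgeted amount."""
    return (actual - budgeted) / budgeted * 100


def needs_recalibration(budgeted: float, actual: float, tolerance_pct: float = 10.0) -> bool:
    """Flag a line item whose spend has drifted beyond the tolerance in either direction."""
    return abs(drift_pct(budgeted, actual)) > tolerance_pct


def reforecast_annual(quarterly_actuals: list[float], quarters_in_year: int = 4) -> float:
    """Naive reforecast: assume each remaining quarter costs what the latest quarter cost."""
    spent = sum(quarterly_actuals)
    remaining = quarters_in_year - len(quarterly_actuals)
    return spent + remaining * quarterly_actuals[-1]


# Hypothetical cache line item: $30k budgeted per quarter ($120k static annual budget),
# then a 22% jump in the second quarter driven by memory pricing.
static_quarterly_budget = 30_000
actuals = [30_000, 36_600]
print(f"Q2 drift: {drift_pct(static_quarterly_budget, actuals[-1]):+.1f}%")
print("Recalibrate:", needs_recalibration(static_quarterly_budget, actuals[-1]))
print(f"Reforecast annual spend: ${reforecast_annual(actuals):,.0f} vs $120,000 budgeted")
```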

The organizations getting cloud costs under control are turning to FinOps, the discipline of bringing financial accountability to cloud spending. FinOps has matured rapidly in the past two years. Today, 98% of FinOps practitioners manage AI spending, up from 63% in 2025.

Organizations using FinOps frameworks are 2.5x more likely to meet or exceed cloud ROI expectations. Structured FinOps programs consistently deliver 25 to 30% reductions in monthly cloud spend.

But FinOps practitioners themselves report that the easy wins are gone. As one practitioner noted in the 2026 State of FinOps report:

"We have hit the 'big rocks' of waste and now face a high volume of smaller opportunities that require more effort to capture."

Many organizations are now self-funding their AI investments through cloud optimization savings. The logic creates a trap: to fund AI, teams cut cloud waste, but running AI drives cloud spend back up. Breaking out of that cycle requires treating cost governance as a design constraint from day one rather than a cleanup exercise.

What the top 12% who get ROI do differently

Across McKinsey, BCG, and PwC's research, the same structural finding keeps surfacing: a narrow group of 5 to 12% of enterprises captures most of the value from AI. McKinsey calls them "AI high performers," BCG identifies 5% as "future-built" enterprises, and PwC labels 12% the "AI Vanguard." While the labels differ, the findings don't. What separates this group from the rest is how they organized their infrastructure around AI.

The struggling organizations follow a recognizable pattern:

Leadership mandates AI adoption → a team builds a proof of concept around an available LLM → the demo impresses executives → the project stalls because nobody planned for integration, monitoring, or measurement.

In contrast, high performers focus on specific business processes where costs or error rates are already well understood. By choosing use cases with established baselines, these organizations can measure AI ROI and demonstrate improvements against previous performance levels.

McKinsey identifies workflow redesign as the top predictor of AI value capture, with high performers 3x more likely to have rebuilt processes from scratch rather than layering AI on top of existing ones. The instinct is to plug a tool into the current process and see if things improve.

The organizations getting returns rethink the process entirely. A customer service team doesn't just add a chatbot to the existing queue. It redesigns intake, triage, and resolution around what the agent can handle autonomously and what requires human input.

Measurement is where most organizations quietly fail. Deloitte’s 2026 data shows that 66% of organizations claim productivity and efficiency gains from AI, but only 20% are generating revenue growth.

The high performers define KPIs before deployment: tickets resolved per hour, cost per transaction, time to first response, and error rate reduction. They connect model performance data to business outcome metrics. BCG's recommended resource split reflects this: 10% on algorithms, 20% on technology, and 70% on people and process transformation. Most organizations invert that ratio, then wonder why the returns don't show up.
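Defining KPIs before deployment means recording a baseline for each one and then reporting change against it in a consistent direction. The sketch below does that for the four KPIs named above; the baseline and current values are hypothetical, and the `higher_is_better` flag is an illustrative way to handle metrics where a lower number is the improvement.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Kpi:
    name: str
    baseline: float  # measured before deployment
    current: float   # measured after deployment
    higher_is_better: bool

    def improvement_pct(self) -> float:
        """Positive means better than the pre-deployment baseline, whatever the KPI's direction."""
        change = (self.current - self.baseline) / self.baseline * 100
        return change if self.higher_is_better else -change


# Hypothetical before/after measurements for a customer service deployment.
kpis = [
    Kpi("tickets resolved per hour", baseline=6.0, current=7.5, higher_is_better=True),
    Kpi("cost per transaction (USD)", baseline=4.20, current=3.15, higher_is_better=False),
    Kpi("time to first response (min)", baseline=45.0, current=12.0, higher_is_better=False),
    Kpi("error rate (%)", baseline=3.0, current=3.3, higher_is_better=False),
]
for kpi in kpis:
    print(f"{kpi.name:<30} {kpi.improvement_pct():+6.1f}%")
```

Reporting every KPI as "percent better than baseline" keeps a regression visible: in this made-up data, the error rate got worse even though the other three improved.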

High performers also build the same FinOps discipline that controls cloud costs into their deployment process from the start. They track cost per inference call, cost per resolved ticket, and cost per business outcome rather than just aggregate cloud spend. They right-size GPU instances, schedule training jobs during off-peak hours, implement prompt caching for repeated queries, and automatically shut down idle resources.
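Per-outcome tracking and caching of repeated queries can be sketched together. The client below puts an exact-match response cache (a simple application-level stand-in for caching repeated queries) in front of a model call, counts spend, and then divides spend by a business outcome instead of by raw calls. The `call_model` function, the $0.02 flat price per call, the cache size, and the resolved-ticket count are all hypothetical. Provider-side prompt caching in LLM APIs works differently, reusing computation for a repeated prompt prefix rather than returning a stored answer.

```python
import hashlib
from collections import OrderedDict
from typing import Callable


class CachedModelClient:
    """Exact-match LRU response cache in front of a model call, with spend accounting.

    `call_model` is a hypothetical stand-in for whatever inference API is in use;
    `usd_per_call` is an assumed flat price per uncached call.
    """

    def __init__(self, call_model: Callable[[str], str], usd_per_call: float, max_entries: int = 10_000):
        self._call_model = call_model
        self._usd_per_call = usd_per_call
        self._max_entries = max_entries
        self._cache: OrderedDict[str, str] = OrderedDict()
        self.calls = 0
        self.cache_hits = 0
        self.spend_usd = 0.0

    def ask(self, prompt: str) -> str:
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self._cache:
            self._cache.move_to_end(key)  # mark as most recently used
            self.cache_hits += 1
            return self._cache[key]
        self.calls += 1  # only uncached prompts reach the model and cost money
        self.spend_usd += self._usd_per_call
        answer = self._call_model(prompt)
        self._cache[key] = answer
        if len(self._cache) > self._max_entries:
            self._cache.popitem(last=False)  # evict the least recently used entry
        return answer


def cost_per_outcome(spend_usd: float, outcomes: int) -> float:
    """Spend divided by business outcomes (e.g. resolved tickets), not by raw model calls."""
    if outcomes == 0:
        raise ValueError("no outcomes recorded; cost per outcome is undefined")
    return spend_usd / outcomes


# Hypothetical usage: a fake model and a workload where many questions repeat.
client = CachedModelClient(call_model=lambda p: f"answer to: {p}", usd_per_call=0.02)
for question in ["reset password", "refund status", "reset password", "reset password", "refund status"]:
    client.ask(question)
resolved_tickets = 4  # hypothetical: tickets closed without human escalation
print(f"model calls: {client.calls}, cache hits: {client.cache_hits}")
print(f"cost per resolved ticket: ${cost_per_outcome(client.spend_usd, resolved_tickets):.3f}")
```

Dividing by resolved tickets rather than by model calls is the design choice that matters here: cache hits lower the numerator, escalations to humans lower the denominator, and both show up in the same number.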

The cost-returns gap will keep widening

The organizations pulling ahead aren’t better funded. They made different decisions earlier:

- Measure before deploying

- Rebuild workflows instead of patching

- Put a senior leader in charge of outcomes

None of that is technically difficult. Most of it is just discipline applied consistently, from the start, before the cloud bill made it urgent.

The window to close the gap is narrowing. Compounding returns and costs are making it increasingly difficult for late adopters to catch up. For every quarter the high performers run ahead, the structural advantage grows. The organizations that treat cost governance and ROI measurement as design constraints now are the ones that will be closing ground in two years. The ones waiting for a cleaner moment to start probably won’t be.

If that’s the problem you’re working on, Svitla’s AI and machine learning team builds the infrastructure, measurement frameworks, and cost governance that connect AI spending to results.